In 2021, the authors of [17] introduced a hybrid spectral conjugate gradient technique for approximating the solutions of nonlinear monotone operator equations and applied it to restoring damaged signals.

In the same year, principal component regression achieved 95% prediction accuracy and Gradient Boosting classification achieved 100% classification accuracy. This performance was comparable to that of state-of-the-art models [18].

Zharmagambetov et al. [19] developed a gradient-directed line search technique for nonlinear systems of equations. The approach reduced the number of iterations and function evaluations and proved numerically efficient.

In the same year, empirical results showed that the proposed model outperformed Gradient Boosting and AdaBoost on several benchmarks in both accuracy and model size [20].

In 2021, researchers found that boosting improved the accuracy and precision of the CART model, although fitting the boosted model took 2 minutes [21].

In the same year, another study established the global convergence of its schemes and proposed two optimal choices for the non-negative constant in the Hager-Zhang conjugate gradient method. Numerical experiments showed that the methods solved large-scale monotone nonlinear equations efficiently [22].

A 2021 comparison showed that gradient-boosting-based regression outperformed random forest regression, bagging and regression trees [23].

Finally, in 2021, gradient-boosting regression outperformed other machine-learning models [24].

A 2022 study found that artificial neural networks (ANNs) and gradient-boosted trees performed best in most cases, even though Gaussian process surrogates are the most commonly used surrogate modeling approach for complex computational models [25].

Contribution of the Article to Related Work

The Gradient Boosting Algorithm is a valuable tool for scientists and engineers who must solve complex equations. Its high prediction accuracy and broad applicability make it useful for research in mathematics, physics and engineering. This study offers academics and practitioners a powerful computational tool for complex mathematical tasks and challenging scientific problems. Its theoretical overview, algorithm analysis, evaluation metrics, experimental findings and practical implementations together form a complete approach to modeling and predicting the solutions of complicated equations.

Advantages and Limitations of the Gradient Boosting Algorithm

Gradient boosting is a powerful machine learning approach with significant advantages over traditional statistical models for time series data, such as ARMA. A noteworthy benefit is that it can outperform these conventional models, particularly in capturing intricate patterns such as holiday effects [26]. This makes it a strong option for time series that exhibit periodic or seasonal fluctuations.
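To make the idea of capturing seasonal and holiday patterns concrete, the following is a minimal sketch, assuming a synthetic daily series (weekly seasonality, a hypothetical holiday every 91 days and Gaussian noise) and scikit-learn's `GradientBoostingRegressor`. Seasonality and holidays enter as explicit features (lagged values, day of week, a holiday flag), which is one common way, not necessarily the one used in [26], to let a tree ensemble learn such patterns. It does not reproduce the comparison with ARMA; the only baseline is the training mean.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingRegressor

# Hypothetical daily series: weekly seasonality plus a spike on "holiday" days.
rng = np.random.default_rng(0)
n_days = 730
t = np.arange(n_days)
day_of_week = t % 7
is_holiday = (t % 91 == 0).astype(float)  # hypothetical holiday calendar
y = 10 + 3 * np.sin(2 * np.pi * t / 7) + 8 * is_holiday + rng.normal(0, 0.5, n_days)


def make_features(y, day_of_week, is_holiday, lags=(1, 7)):
    """Stack lagged target values with calendar features, one row per usable day."""
    max_lag = max(lags)
    X = np.column_stack(
        [y[max_lag - lag : len(y) - lag] for lag in lags]  # y[i - lag] for day i
        + [day_of_week[max_lag:], is_holiday[max_lag:]]
    )
    return X, y[max_lag:]


X, target = make_features(y, day_of_week, is_holiday)

# Chronological split: train on the past, evaluate on the most recent 90 days.
split = len(X) - 90
model = GradientBoostingRegressor(
    n_estimators=300, max_depth=3, learning_rate=0.05, random_state=0
)
model.fit(X[:split], target[:split])
pred = model.predict(X[split:])

mae = np.mean(np.abs(pred - target[split:]))
# Naive baseline: always predict the mean of the training targets.
baseline_mae = np.mean(np.abs(target[split:] - target[:split].mean()))
print(f"gradient boosting MAE: {mae:.3f}, mean-baseline MAE: {baseline_mae:.3f}")
```

A chronological split is used instead of a random one so that the model is never trained on days that come after the days it is evaluated on.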

LightGBM (Light Gradient Boosting Machine), an improved decision-tree-based gradient boosting technique, has been applied successfully to a number of classification tasks. In one application it achieved a precision of 93%, a recall of 93% and a Matthews correlation coefficient (MCC) of 0.91 [27]. These figures show that it can handle challenging classification tasks well.
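The three scores quoted above can be computed from the counts of a binary confusion matrix. The sketch below uses hypothetical labels (not data from [27]) and does not train a LightGBM model; it implements precision, recall and the MCC directly and cross-checks them against scikit-learn's `precision_score`, `recall_score` and `matthews_corrcoef`.

```python
import math

from sklearn.metrics import matthews_corrcoef, precision_score, recall_score


def binary_scores(y_true, y_pred):
    """Precision, recall and MCC from confusion counts (positive class = 1).

    Assumes at least one predicted positive and one actual positive.
    """
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    # MCC = (TP*TN - FP*FN) / sqrt((TP+FP)(TP+FN)(TN+FP)(TN+FN))
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0
    return precision, recall, mcc


# Hypothetical labels: TP = 4, FN = 1, TN = 4, FP = 1.
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 1, 0]
y_pred = [1, 1, 1, 0, 0, 0, 0, 1, 1, 0]

precision, recall, mcc = binary_scores(y_true, y_pred)
print(precision, recall, mcc)  # 0.8 0.8 0.6

# Cross-check against scikit-learn's implementations.
assert math.isclose(precision, precision_score(y_true, y_pred))
assert math.isclose(recall, recall_score(y_true, y_pred))
assert math.isclose(mcc, matthews_corrcoef(y_true, y_pred))
```

Unlike precision and recall, the MCC uses all four confusion counts. This is why it is often reported alongside them when the classes are imbalanced.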

One of Gradient Boosting's strengths is that it optimizes its sub-models sequentially, so that each one extracts discriminant information that complements, rather than duplicates, what the previous sub-models captured [28]. Because each sub-model concentrates on a different part of the problem, namely the errors left by its predecessors, the overall predictive capacity of the algorithm increases.
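The complementary behavior of the sub-models can be seen in a minimal least-squares boosting loop. Assuming squared-error loss, the negative gradient is simply the residual, so each new regression tree is fitted to what the current ensemble still gets wrong. This is a textbook sketch with hypothetical helper names (`fit_gradient_boosting`, `predict`) and synthetic data, not the formulation analyzed in [28].

```python
import numpy as np
from sklearn.tree import DecisionTreeRegressor


def fit_gradient_boosting(X, y, n_stages=50, learning_rate=0.1, max_depth=2):
    """Least-squares gradient boosting: each tree is fitted to the current residuals."""
    init = y.mean()  # stage 0: a constant model
    pred = np.full(len(y), init)
    trees, train_mse = [], []
    for _ in range(n_stages):
        residuals = y - pred  # negative gradient of 0.5 * (y - pred) ** 2
        tree = DecisionTreeRegressor(max_depth=max_depth, random_state=0)
        tree.fit(X, residuals)
        pred = pred + learning_rate * tree.predict(X)  # shrunken update
        trees.append(tree)
        train_mse.append(np.mean((y - pred) ** 2))
    return init, trees, train_mse


def predict(init, trees, X, learning_rate=0.1):
    """Sum the constant model and the shrunken contributions of all trees."""
    return init + learning_rate * sum(tree.predict(X) for tree in trees)


rng = np.random.default_rng(0)
X = rng.uniform(-3, 3, size=(200, 1))
y = np.sin(X[:, 0]) + 0.1 * rng.normal(size=200)

init, trees, train_mse = fit_gradient_boosting(X, y)
print(f"train MSE after 1 stage: {train_mse[0]:.3f}, after {len(trees)}: {train_mse[-1]:.3f}")
```

Each tree is a least-squares fit to the residuals, so adding it with a learning rate between 0 and 1 cannot increase the training error. The recorded training MSE is therefore non-increasing from one stage to the next.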

Although Gradient Boosting performs remarkably well, its limitations must also be taken into account. For example, Extreme Gradient Boosting (XGBoost), a well-known variant of Gradient Boosting, achieved the best performance results in one study [29], but it can have drawbacks such as higher time and resource demands. When choosing a suitable algorithm, the available resources and the application's requirements should therefore be weighed carefully.
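One way to contain the training time and resource demands mentioned above is early stopping. The sketch below is an assumption, not a method from [29] or [30]. It uses scikit-learn's `GradientBoostingRegressor` rather than XGBoost and synthetic data, and it compares a fixed 500-stage fit with one that holds out 10% of the data and stops when the validation loss stops improving (`n_iter_no_change`, `validation_fraction`, `tol`).

```python
import time

import numpy as np
from sklearn.ensemble import GradientBoostingRegressor

# Hypothetical regression data.
rng = np.random.default_rng(0)
X = rng.uniform(-3, 3, size=(1000, 5))
y = X[:, 0] ** 2 + np.sin(X[:, 1]) + 0.1 * rng.normal(size=1000)


def timed_fit(**params):
    """Fit a GradientBoostingRegressor and return it with its wall-clock fit time."""
    model = GradientBoostingRegressor(random_state=0, **params)
    start = time.perf_counter()
    model.fit(X, y)
    return model, time.perf_counter() - start


# Without early stopping: all 500 stages are always built.
full, full_time = timed_fit(n_estimators=500)
# With early stopping: 10% of the training data is held out, and training stops
# once the validation loss has not improved by at least tol for 10 stages.
early, early_time = timed_fit(
    n_estimators=500, n_iter_no_change=10, validation_fraction=0.1, tol=1e-4
)

print(f"no early stopping: {full.n_estimators_} stages, {full_time:.2f} s")
print(f"early stopping:    {early.n_estimators_} stages, {early_time:.2f} s")
```

Timings depend on the machine. The number of fitted stages, exposed as `n_estimators_`, is a more reproducible indicator of the cost saved. With early stopping, the model is trained on only 90% of the data, which is part of the trade-off.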

In conclusion, Gradient Boosting is a useful technique that outperforms traditional statistical models in detecting intricate patterns in time series data, and its sub-model optimization enables effective extraction of discriminant information. The strong results of the LightGBM variant in certain applications suggest that it is especially well suited to classification tasks. However, particularly for algorithms such as XGBoost [30], these benefits must be weighed against latency and resource costs.

Metrics to Assess the Performance of the Algorithm

Mean Squared Error (MSE): The average of the squared differences between actual and predicted values. It measures the model's accuracy, with lower values indicating better performance [31].

Root Mean Squared Error (RMSE): This metric, the square root of the Mean Squared Error (MSE), expresses the error in the same units as the target variable, which makes it easier to interpret [31,32].

Mean Absolute Error (MAE): MAE is the average of the absolute differences between observed and predicted values. Because the errors are not squared, it is less sensitive to outliers than MSE [31,33].

R-squared (R²): R-squared indicates how much of the variance of the target variable can be predicted from the input features. Higher values indicate a better fit. The best possible score is 1, a model that always predicts the mean of the target scores 0, and a model that fits worse than that can score below 0 [34].

Explained Variance Score: This metric calculates the proportion of the target variable's variance that the model can explain. Like R², it evaluates the model's goodness of fit; the two coincide when the residuals have zero mean [35].

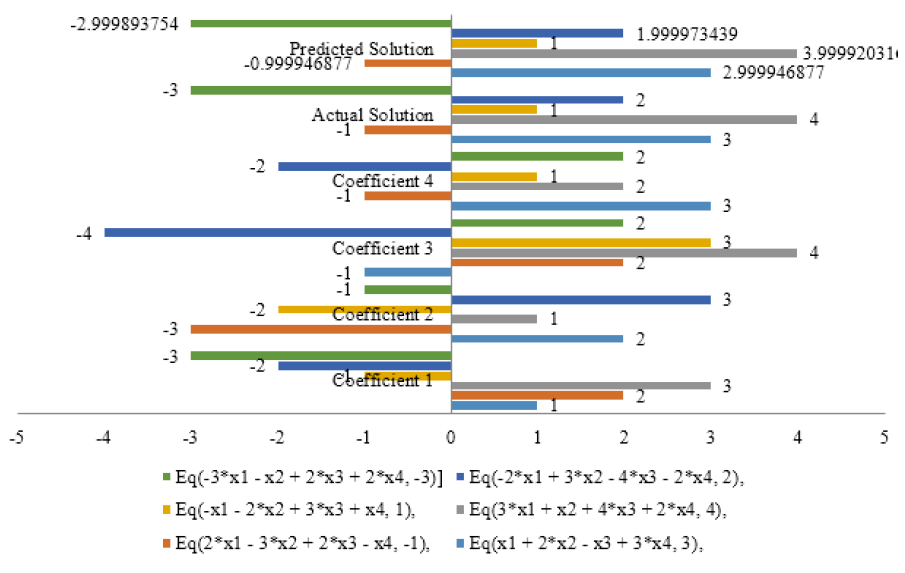

Mean Absolute Percentage Error (MAPE): MAPE measures the average absolute percentage difference between actual and forecasted values. Because each error is expressed relative to the magnitude of the target variable, it shows how accurate the predictions are in relative terms.

The scikit-learn library in Python provides functions for computing these evaluation metrics. To evaluate the approach, fit the gradient boosting regression model and use it to predict the solutions of the equations. Then compare the predicted and actual solutions using the evaluation metrics to determine the model's performance.
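The evaluation workflow described above can be sketched as follows. The equation family (the positive root of x**2 + b*x - c = 0 over hypothetical coefficient ranges), the train/test split and the hyperparameters are illustrative assumptions, not the experimental setup of this study. The coefficient ranges keep every root positive, so MAPE is well defined. All six metrics come from `sklearn.metrics`, with RMSE taken as the square root of MSE, and each one is cross-checked against its definition. Note that `mean_absolute_percentage_error` returns a fraction rather than a percentage.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.metrics import (
    explained_variance_score,
    mean_absolute_error,
    mean_absolute_percentage_error,
    mean_squared_error,
    r2_score,
)
from sklearn.model_selection import train_test_split

# Hypothetical equation family: for c > 0, x**2 + b*x - c = 0 has exactly one
# positive root, (-b + sqrt(b**2 + 4c)) / 2, which serves as the target.
rng = np.random.default_rng(42)
b = rng.uniform(-2, 2, 1000)
c = rng.uniform(1, 5, 1000)
X = np.column_stack([b, c])
y = (-b + np.sqrt(b**2 + 4 * c)) / 2

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=0)
model = GradientBoostingRegressor(
    n_estimators=300, learning_rate=0.05, max_depth=3, random_state=0
)
model.fit(X_train, y_train)
y_pred = model.predict(X_test)  # predicted solutions

mse = mean_squared_error(y_test, y_pred)
metrics = {
    "MSE": mse,
    "RMSE": np.sqrt(mse),  # same units as the target
    "MAE": mean_absolute_error(y_test, y_pred),
    "R2": r2_score(y_test, y_pred),
    "Explained variance": explained_variance_score(y_test, y_pred),
    "MAPE": mean_absolute_percentage_error(y_test, y_pred),  # a fraction, not %
}
for name, value in metrics.items():
    print(f"{name}: {value:.4f}")

# Cross-check the library results against the definitions of each metric.
residuals = y_test - y_pred
assert np.isclose(metrics["MSE"], np.mean(residuals**2))
assert np.isclose(metrics["MAE"], np.mean(np.abs(residuals)))
assert np.isclose(
    metrics["R2"], 1 - np.sum(residuals**2) / np.sum((y_test - y_test.mean()) ** 2)
)
assert np.isclose(metrics["Explained variance"], 1 - np.var(residuals) / np.var(y_test))
assert np.isclose(metrics["MAPE"], np.mean(np.abs(residuals / y_test)))
```

The cross-checks also make the difference between R² and the explained variance score visible. The former uses the raw squared residuals, while the latter uses their variance, so a constant bias in the predictions lowers R² but not the explained variance.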